Machines are increasingly making important decisions that have historically been made by people, such as who should get a job interview or who should receive a loan. For valid legal, reputational, and technical reasons, many organizations and regulators do not fully trust machines to make these judgments on their own. As a result, humans often remain involved in AI decision making, which is referred to as a “human-in-the-loop.” For example, in the detection of skin cancer, the process may now involve an AI tool reviewing a photograph of a mole and making a preliminary assessment of cancer risk, followed by a dermatologist either confirming or rejecting that determination.

For these types of decisions, where human safety is at risk and there is an objectively correct answer (i.e., whether a mole is cancerous or not), human review of AI decisions is appropriate, and indeed, may be required by law. But little has been written about exactly when and how humans should review AI decisions, and how that review should be conducted for decisions with no objectively correct answer (e.g., who deserves a job interview). This article is an attempt to fill in some of these gaps by proposing a framework for achieving optimal joint human-machine decision making that goes beyond assuming that human judgment should always prevail over machine decisions.

Regulatory Requirements for Human Review of AI Decisions

Most regulations that address machine decision making require humans to review machine decisions that carry significant risks. For example, Article 22 of the European Union’s General Data Protection Regulation provides that EU residents should not be subject to “solely automated” decisions that significantly affect them. The European Commission’s proposed AI Act similarly provides that high-risk AI systems should be designed and developed so that they can be effectively overseen by natural persons, including by enabling humans to intervene in or interrupt certain AI operations. In the United States, the Biden Administration’s recently released Blueprint for an AI Bill of Rights (which is not binding but will likely be influential on any future U.S. AI regulations) provides that AI systems should be monitored by humans as a check in the event that an automated system fails or produces an error.

These and other AI regulations require some level of human oversight over autonomous decision making to catch what are called “algorithmic errors,” which are mistakes made by machines. Such errors certainly do occur, but these regulations are flawed to the extent that they imply that whenever a human and a machine make different decisions or reach different conclusions, the machine is necessarily wrong and the human is necessarily right. As discussed below, in many instances there is no objectively right decision, and resolving the disagreement in favor of the human does not always lead to the optimal outcome.

In a recent Forbes article on AI ethics and autonomous systems, Lance Eliot offered some alternatives for resolving human-machine disagreements instead of defaulting to the view that the human is always correct:

- The machine’s decision prevails;

- Some predetermined compromise position is adopted;

- Another human is brought into the decision-making process; or

- Another machine is brought into the decision-making process.

Eliot rightly points out that over thousands of years, societies have developed multiple ways to efficiently resolve human-human disagreements, and, in fact, we often design processes to surface such disagreements in order to foster better overall decision making. Developing a similar system for identifying and resolving human-machine disagreements will be one of the fundamental challenges of AI deployment and regulatory oversight in the next five years. As discussed below, for many AI uses, it makes sense to require a human to review a machine’s decisions and override them when the human disagrees. But for some decisions, that approach will result in more errors, reduced efficiency, and increased liability risks, and a different dispute resolution framework should be adopted.

Not All Errors Are Equal – False Positives vs. False Negatives

For many decisions, there are two different types of errors – false positives and false negatives – and they may have very different consequences. For example, assume that a dermatologist correctly determines whether a mole is cancerous 90% of the time, i.e., for 90 out of every 100 patients. For the patients who receive an incorrect diagnosis, it would be much better if the doctor’s mistake were wrongly identifying a mole as cancerous when it is not (i.e., a false positive), rather than wrongly identifying a cancerous mole as benign (i.e., a false negative). A false positive may result in an unnecessary biopsy that concludes that the mole is benign, which involves some additional inconvenience and cost. But that is clearly preferable to a false negative (i.e., a missed cancer diagnosis), which can have catastrophic results, including delayed treatment and even premature death.

Now suppose that a machine that checks photographs of moles for skin cancer is also 90% accurate, but because the machine has been trained very differently from the doctor and does not consider the image in the context of other medical information (e.g., family history), the machine makes different errors than the doctor. What is the optimal outcome for patients when the doctor and the machine disagree as to whether a mole is benign or cancerous? Considering the relatively minor cost and inconvenience of a false positive, the optimal outcome may be that if either the doctor or the machine believes that the mole is cancerous, it gets shaved and sent for a biopsy. So, adding a machine into the decision-making process and treating it as an equal to the doctor increases the total number of errors, because every false positive made by either the doctor or the machine now leads to a biopsy. But because a cancer is now missed only if both the doctor and the machine miss it, that decision resolution framework reduces the number of potentially catastrophic errors, and the overall decision-making process is improved. If instead the human’s decision always prevailed, there would be cases where the machine detected cancer but the human did not, so no biopsy was taken, and the cancer was discovered only later, perhaps with extremely negative consequences for the patient due to the delayed diagnosis. This would clearly be a less desirable outcome, with increased costs, liability risks, and, most importantly, patient harm.
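To make the arithmetic concrete, here is a minimal Python sketch using an entirely hypothetical cohort of 100 moles (10 of them cancerous) in which the doctor and the machine are each 90% accurate but err on different moles. The cohort, the specific errors, and the names `count_errors` and `either_flags` are illustrative assumptions, not data from any study. The sketch counts false positives and false negatives for each reader alone and under the rule that either one can send a mole for biopsy.

```python
from dataclasses import dataclass


@dataclass
class ErrorCounts:
    false_positives: int
    false_negatives: int

    @property
    def total(self) -> int:
        return self.false_positives + self.false_negatives


def count_errors(flagged: list[bool], cancerous: list[bool]) -> ErrorCounts:
    """Compare each reader's cancer flags against the true diagnosis of each mole."""
    fp = sum(f and not c for f, c in zip(flagged, cancerous))
    fn = sum(c and not f for f, c in zip(flagged, cancerous))
    return ErrorCounts(false_positives=fp, false_negatives=fn)


def either_flags(doctor: list[bool], machine: list[bool]) -> list[bool]:
    """Equality rule: a mole goes to biopsy if either the doctor or the machine flags it."""
    return [d or m for d, m in zip(doctor, machine)]


# Hypothetical cohort of 100 moles; by assumption, moles 0-9 are cancerous.
cancerous = [i < 10 for i in range(100)]

# Hypothetical doctor: misses cancers 0-1 and wrongly flags benign moles 10-17 (90% accurate).
doctor = [(i < 10 and i not in (0, 1)) or 10 <= i < 18 for i in range(100)]

# Hypothetical machine: misses cancers 2-4 and wrongly flags benign moles 18-24 (90% accurate).
machine = [(i < 10 and i not in (2, 3, 4)) or 18 <= i < 25 for i in range(100)]

for name, flags in [("doctor", doctor), ("machine", machine),
                    ("either flags", either_flags(doctor, machine))]:
    e = count_errors(flags, cancerous)
    print(f"{name}: {e.false_positives} FP, {e.false_negatives} FN, {e.total} total")
```

With these assumed numbers, the doctor makes 10 errors (8 false positives, 2 false negatives) and so does the machine (7 and 3), while the either-flags rule makes 15 errors, all of them false positives, and misses no cancers. That result depends on the two readers’ errors not overlapping; a cancer missed by both would still be missed.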

Not All Decisions Are Right or Wrong – Sorting vs. Selecting

There are times when machines make mistakes. If a semi-autonomous car wrongly identifies a harvest moon as a red traffic light and slams on the brakes, that is an error, and the human driver should be able to override that incorrect decision. Conversely, if the human driver is heavily intoxicated or has fallen asleep, their driving decisions are likely wrong and should not prevail.

But many machine decisions do not lend themselves to a binary right/wrong assessment. Consider, for example, credit and lending decisions. A loan application from a person with a very limited credit history may be rejected by a human banker, but the same loan may be approved by an AI tool that considers non-traditional factors, such as cash-flow transactions from peer-to-peer money transfer apps. For these types of decisions, it is difficult to characterize either the human or the machine as right or wrong. First, if the loan is rejected, there is no way to know whether it would have been paid off had it been granted, so the denial cannot be evaluated as right or wrong. In addition, determining which view should prevail depends on a number of factors, such as whether the bank is trying to grow its pool of borrowers and whether false positives (i.e., lending to individuals who are likely to default) carry more or less risk than false negatives (i.e., not lending to individuals who are likely to repay their loans in full).

The False Choice of Rankings and the Need for Effective Equivalents

Many AI-based decisions represent binary yes/no choices (e.g., whether to underwrite a loan or whether a mole should be tested for cancer). But some AI systems are used to prioritize among candidates or to allocate limited resources. For example, algorithms are often used to rank job applicants or to prioritize which patients should receive the limited number of organs available for transplant. In these AI sorting systems, candidates are scored and ranked. However, as David Robinson points out in his book, Voices in the Code, it seems arbitrary and unfair to treat one person as a superior candidate for a kidney transplant because an algorithm gave them a score of 9.542, compared to a person with a nearly identical score of 9.541. This is an example of the precision of the scoring algorithm creating the illusion of a meaningful choice when, in reality, the two candidates are effectively equivalent, and some other method should be used to select between them.
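One way to put “effective equivalents” into practice is to group candidates whose scores fall within some tolerance of each other and then choose within each group by a different method. The minimal Python sketch below assumes a tolerance of 0.05 and uses a random lottery as the tie-breaker. Both are hypothetical choices made for illustration, not methods prescribed by Robinson or by any organ allocation system, and `equivalence_tiers` and `select_from_tier` are invented names.

```python
import random


def equivalence_tiers(scores: dict[str, float], tolerance: float) -> list[list[str]]:
    """Group candidates whose scores are within `tolerance` of their tier's top score."""
    ranked = sorted(scores, key=lambda name: scores[name], reverse=True)
    tiers: list[list[str]] = []
    for name in ranked:
        # Join the current tier only if close enough to that tier's highest score.
        if tiers and scores[tiers[-1][0]] - scores[name] <= tolerance:
            tiers[-1].append(name)
        else:
            tiers.append([name])
    return tiers


def select_from_tier(tier: list[str], rng: random.Random) -> str:
    """Hypothetical tie-breaker: a lottery among effectively equivalent candidates."""
    return rng.choice(tier)


scores = {"candidate_a": 9.542, "candidate_b": 9.541, "candidate_c": 8.900}
tiers = equivalence_tiers(scores, tolerance=0.05)
print(tiers)  # [['candidate_a', 'candidate_b'], ['candidate_c']]
print(select_from_tier(tiers[0], random.Random()))
```

Each tier is anchored to its highest score rather than to the previous candidate, so a long run of slightly lower scores cannot chain together into one oversized tier. Any other agreed-upon criterion could replace the lottery in `select_from_tier`.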

Humans and Machines Playing to Their Strengths and the Promise of Joint Decision Making

Despite significant efforts to use AI to improve the identification of cancer in mammograms or MRIs, these automated screening tools have struggled to make diagnostic gains over human physicians. Doctors reading mammograms reportedly miss between 15% and 35% of breast cancers, but AI tools generally underperform doctors. The challenge of AI for mammogram analysis differs from the skin cancer screening discussed above because mammograms have a much higher cost for false positives: a biopsy of breast tissue is more invasive, time-consuming, painful, and expensive than shaving a skin mole.

Nevertheless, a recent study published in The Lancet indicates that a complex joint-decision framework, with doctors and AI tools working together and checking each other’s decisions, can lead to better outcomes in the review of mammograms, both by reducing false positives (i.e., mammograms wrongly classified as showing cancer when no cancer is present) and by reducing false negatives (i.e., mammograms wrongly classified as showing no cancer when cancer is present).

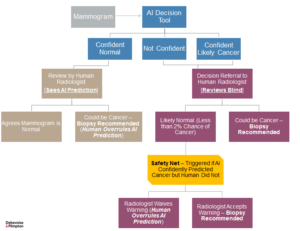

According to this study, the suggested optimal workflow involves training the machine to sort mammograms into three categories: (1) confident normal, (2) not confident, and (3) confident cancerous:

- Confident Normal: If the machine determines that a mammogram is clearly normal (which is true for most cases), that decision is reviewed by a radiologist who is informed of the machine’s earlier decision. If the radiologist disagrees with the machine and believes that cancer may be present, then additional testing or a biopsy is ordered. If a biopsy is performed and the results are positive, then the machine’s decision is treated as a false negative, and the biopsy results are used to recalibrate the machine.

- Not Confident or Confident Cancerous: If the machine determines that a mammogram is likely cancerous, or the machine is uncertain about its decision, then that mammogram is referred to a different radiologist, who is not told which of those two decisions the machine made. If the radiologist independently determines that the mammogram is likely cancerous, then a biopsy is ordered. If the radiologist independently determines that the mammogram is normal, then nothing further happens if the machine had classified that mammogram as “uncertain.” If, however, the radiologist classifies the mammogram as normal but the machine had decided that the mammogram was likely cancerous, then a “safety net” is triggered: the radiologist is warned about the inconsistency and asked to review the mammogram again (or another radiologist reviews it). After that second review, the radiologist can either change their initial decision and agree with the machine’s decision to order a biopsy, or overrule the machine and continue to classify the mammogram as normal.
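The routing logic of this workflow can be sketched as a single decision function. This is a simplified Python illustration of the steps as summarized above, not the study’s implementation: the names `MachineCall`, `Outcome`, and `review_mammogram` are hypothetical, each radiologist read is reduced to a yes/no “suspects cancer” input, and the recalibration step (feeding positive biopsy results on “confident normal” scans back into training) is not modeled.

```python
from enum import Enum, auto
from typing import Callable


class MachineCall(Enum):
    CONFIDENT_NORMAL = auto()
    NOT_CONFIDENT = auto()
    CONFIDENT_CANCEROUS = auto()


class Outcome(Enum):
    NO_FURTHER_ACTION = auto()
    BIOPSY_OR_MORE_TESTING = auto()


def review_mammogram(
    machine_call: MachineCall,
    radiologist_suspects_cancer: bool,
    second_review_suspects_cancer: Callable[[], bool],
) -> tuple[Outcome, bool]:
    """Return the outcome and whether the safety net was triggered."""
    if machine_call is MachineCall.CONFIDENT_NORMAL:
        # The radiologist knows the machine called this normal and may still override it.
        if radiologist_suspects_cancer:
            return Outcome.BIOPSY_OR_MORE_TESTING, False
        return Outcome.NO_FURTHER_ACTION, False

    # NOT_CONFIDENT or CONFIDENT_CANCEROUS: a different radiologist reads the scan
    # without being told which of these two calls the machine made.
    if radiologist_suspects_cancer:
        return Outcome.BIOPSY_OR_MORE_TESTING, False
    if machine_call is MachineCall.NOT_CONFIDENT:
        return Outcome.NO_FURTHER_ACTION, False

    # Safety net: the machine said "likely cancerous" but the radiologist said normal,
    # so the scan is reviewed again (by the same or another radiologist).
    if second_review_suspects_cancer():
        return Outcome.BIOPSY_OR_MORE_TESTING, True
    return Outcome.NO_FURTHER_ACTION, True


outcome, safety_net = review_mammogram(
    MachineCall.CONFIDENT_CANCEROUS,
    radiologist_suspects_cancer=False,
    second_review_suspects_cancer=lambda: True,
)
print(outcome, safety_net)  # Outcome.BIOPSY_OR_MORE_TESTING True
```

The second review is passed in as a callable so that it is requested only when the safety net actually fires, mirroring the idea that most scans never need a second look.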

This complicated workflow achieves superior results because it assigns each element of the decision to the contributor who is best at it. The machine is much better at quickly and consistently identifying which scans are clearly not interesting. The radiologists are better at identifying which potentially interesting scans are actually interesting, but the doctors, being human, are not better all the time. In certain circumstances (e.g., when the doctors are tired, distracted, or rushed), the machine may be better, so a safety net is inserted into the process to catch those situations and thereby optimize the overall decision process. This is a good example of why the workflow of human-machine decisions should be tailored to the particular problem. Here again, having the human decisions always prevail would not achieve the best results. Only through a complex framework, with decision-makers playing to their strengths and covering for each other’s weaknesses (e.g., the machines never getting tired or bored), can results be significantly improved.

Creating a Framework for Resolving Human-Machine Disagreements

Again, much of the regulatory focus on AI decisions is aimed at requiring humans to review certain decisions being made by machines and to correct the machine’s errors. For low-stakes decisions that need to be made quickly and in large volume, that approach is often the right decision framework, even if it is not always the most accurate one. One of the major benefits of AI is speed, and in designing any human-machine decision framework, one must be careful not to trade substantial losses in overall efficiency for insignificant gains in accuracy.

But in many cases, the decisions being made by AI significantly affect people’s lives, and efficiency is therefore not as important as accuracy or rigor. In those cases, when humans and machines have a legitimate disagreement, assuming that the human is right and should prevail is not always the best approach. Instead, the optimal results come from analyzing the particular dispute and implementing a resolution framework that is tailored to the particular decision-making process and automated technology.

Below are some examples of how different human-machine decision frameworks may be appropriate depending on the circumstances.

Option #1 – Human in the Loop: The machine reviews a large number of candidates and makes an initial assessment by ranking them, but the actual selection is made by a human

Decision Examples:

- Who should receive an interview for a particular job.

- Who should be admitted to a particular school.

- Which insurance claims should be investigated for potential fraud.

Factors:

- The machine is good at separating strong candidates from weak ones, but is not as good at choosing the best among a group of strong candidates.

- Significant time is saved by having the machine identify the strong candidates within a large pool of weak candidates.

- The final selection decision is very complex, with many intangible factors.

- The people affected by the decision expect a human to be involved in the final selection.

Option #2 – Human Over the Loop: The machine makes an initial decision without human involvement, which can be quickly overridden by a human if necessary

Decision Examples:

- Whether a credit card purchase was fraudulent and the credit card should be disabled to prevent further fraud.

- Whether a semi-autonomous vehicle should brake to avoid a collision.

Factors:

- A large number of decisions must be made on an ongoing basis, and usually, the decision is to do nothing.

- The decisions need to be made extremely quickly.

- There is a significant risk of harm from even short delays in making the correct decision.

- The decisions are easily reversible, and a human can easily intervene after the machine decision has been made.

- There is usually a clear right or wrong decision that humans can quickly verify.

Option #3 – Machine Authority: The machine prevails in a disagreement with a human

Decision Examples:

- An AI cybersecurity detection tool prevents a human from emailing a malicious attachment that is likely to spread a computer virus.

- A semi-autonomous delivery truck pulls over and stops if it detects that its human driver has fallen asleep or is severely intoxicated.

Factors:

- Need for quick decisions to prevent significant harm.

- Substantial risks to human safety or property if the human is wrong, and much smaller risks if the machine is wrong.

- Possibility that human decision making is impaired.

Option #4 – Human and Machine Equality: If either the human or the machine decides X, then X is done

Decision Examples:

- Deciding whether to send a potentially cancerous mole for a biopsy.

- Which employees should receive an additional security check before gaining computer access to highly confidential company documents.

Factors:

- Both the machine and the human have high accuracy, but they make different errors.

- The cost of either the machine or the human missing something (i.e., a false negative) is much higher than the cost of either the machine or the human flagging something that turns out not to be an issue (i.e., a false positive).
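Options #2 through #4 differ mainly in how a yes/no disagreement over some action X is resolved. The minimal Python sketch below makes that contrast explicit. `Framework`, `resolve`, and the convention that `None` means “the human has not weighed in yet” are illustrative assumptions; Option #1 is left out because it involves ranking rather than a single yes/no rule.

```python
from enum import Enum
from typing import Optional


class Framework(Enum):
    HUMAN_OVER_THE_LOOP = "Option #2"
    MACHINE_AUTHORITY = "Option #3"
    HUMAN_MACHINE_EQUALITY = "Option #4"


def resolve(framework: Framework, machine_acts: bool, human_acts: Optional[bool]) -> bool:
    """Decide whether action X is taken; `human_acts` is None if the human has not weighed in."""
    if framework is Framework.HUMAN_OVER_THE_LOOP:
        # The machine's decision takes effect immediately; a later human override replaces it.
        return machine_acts if human_acts is None else human_acts
    if framework is Framework.MACHINE_AUTHORITY:
        # The machine prevails regardless of what the human decides.
        return machine_acts
    if framework is Framework.HUMAN_MACHINE_EQUALITY:
        # Both weigh in, and if either decides X, X is done.
        if human_acts is None:
            raise ValueError("the equality framework requires a human decision")
        return machine_acts or human_acts
    raise ValueError(f"unsupported framework: {framework}")


# Card disabled by the machine, then reinstated when a human confirms the purchase.
print(resolve(Framework.HUMAN_OVER_THE_LOOP, machine_acts=True, human_acts=False))  # False
# Truck pulls over even though the impaired driver wants to keep going.
print(resolve(Framework.MACHINE_AUTHORITY, machine_acts=True, human_acts=False))  # True
# Mole goes to biopsy because the machine flagged it, even though the doctor did not.
print(resolve(Framework.HUMAN_MACHINE_EQUALITY, machine_acts=True, human_acts=False))  # True
```

In a real deployment, the hard part is choosing the framework for a given decision, not applying it; the factors listed under each option are what drive that choice.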

Option #5 – Hybrid Human-Machine Decisions: The human and the machine check each other’s decisions, sometimes without prior knowledge of what the other decided; for high-risk decisions where the human and the machine clearly disagree, the human (or another human) is alerted and asked to review the decision again

Decision Examples:

- Assessing mammograms to decide whether they indicate the presence of cancer and whether a biopsy should be ordered (discussed above).

- Reviewing a large volume of documents before producing them to opposing counsel in a litigation or investigation to decide whether they contain attorney-client privileged communications.

Factors:

- There are significant costs for both false positives and false negatives.

- The consequential decision arises in only a small number of cases, and most cases are not interesting.

- The machine is very good at confidently identifying cases that are not interesting, and that decision can be quickly confirmed by a human, with the machine recalibrated if necessary.

- There are a large number of cases where the machine is uncertain as to the correct decision.

- The machine is not very good at identifying which potentially interesting cases are actually interesting, but it can improve with more training.

- The human is better overall at identifying which potentially interesting cases are actually interesting, but the human is not consistently better, and at certain times (when the human is tired, distracted, rushed, etc.), the machine is better.

Conclusion

These examples demonstrate that there are multiple viable options for resolving disputes between machines and humans. Sometimes, circumstances call for a simple workflow, with human decisions prevailing. In other cases, however, a more complicated framework may be needed because the humans and the machines excel at different aspects of the decision, and those aspects do not easily fit together.

In the coming years, efforts to improve people’s lives through the adoption of AI will accelerate. Accordingly, it will become increasingly important for regulators and policymakers to recognize that multiple options exist to optimize human-machine decision making. Requiring a human to review every significant AI decision, and to always substitute their decision for the machine’s decision when they disagree, may unnecessarily constrain certain innovations and will not yield the best results in many cases. Instead, the law should require AI developers and users to assess and adopt the human-machine dispute-resolution framework that most effectively unlocks the value of the AI by reducing the risks of both human and machine errors, improving efficiency, and providing appropriate opportunities to challenge or learn from past errors. In many cases, that framework will involve human decisions prevailing over machines, but not in all of them.

To subscribe to the Data Blog, please click here.

The Debevoise Artificial Intelligence Regulatory Tracker (DART) is now available to clients to help them quickly assess and comply with their current and anticipated AI-related legal obligations, including municipal, state, federal, and international requirements.